Small Language Models 2025: Complete Guide for SMEs

TL;DR: Discover how Small Language Models (SLMs) can reduce AI costs by up to 90% while enabling offline operations. Complete 2025 implementation guide for SME leaders.

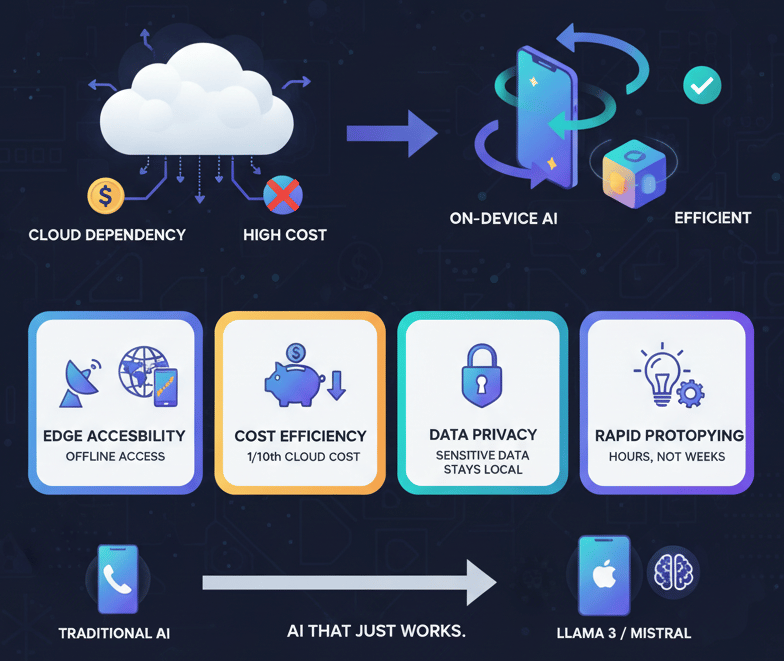

Quick Take: Small Language Models (SLMs) deliver enterprise AI capabilities on local devices, with cost reductions of up to 90% versus cloud solutions. Edge deployment removes network round-trip latency, keeps data on the device, and enables offline AI operations for field teams and remote workflows.

Small but Mighty: The Rise of Small Language Models

Let me cut to the chase: you don't need a hundred-billion-parameter model in the cloud to get real business value. In fact, the latest trend is Small Language Models (SLMs) that run right on your phone or edge device, and they're transforming who can use AI and where.

Take, for example, Meta's release of Llama 3.2, which includes compact variants with one and three billion parameters. These models fit on a modern laptop or even a high-end smartphone. You get faster response times, lower costs, and zero dependency on cloud uptime or bandwidth. Mistral AI followed with models optimized for local deployment, striking a strong balance between size and capability.
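
To see why one- and three-billion-parameter models fit on a laptop or phone, here is a rough back-of-the-envelope sketch. It assumes memory is dominated by the weights (parameters times bits per parameter) and ignores runtime overhead such as the context cache, so treat the results as a lower bound. The function name `weight_memory_gb` is made up for this illustration.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed for model weights only, in GB (10^9 bytes).

    Assumption: ignores activation memory, context cache, and runtime overhead.
    """
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e9


# Compare the two compact sizes mentioned above at common precisions.
for name, params in [("1B model", 1e9), ("3B model", 3e9)]:
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Lower precision (quantization) is what usually brings a small model within reach of a phone, at some cost in output quality.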

Why Small Language Models Matter for Your Business

Here's why you should care today:

- Edge Accessibility: With SLMs, your sales reps in remote areas can run AI-driven product demos offline, even where connectivity is spotty.

- Cost Efficiency: Running a small model on hardware in your office avoids hefty cloud compute bills. On-premises inference can cost roughly one-tenth of comparable cloud usage, depending on your volume and hardware.

- Data Privacy: Sensitive data stays on the device, so you avoid the legal headaches of sending customer information to third-party servers.

- Rapid Prototyping: Spin up a private SLM for internal Q&A or document summarization in hours, not weeks.
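
To check the cost argument against your own numbers, a minimal sketch like the one below can help. Every figure in it (token volume, cloud price, hardware cost, power draw, electricity rate) is a placeholder assumption, not a measured or quoted value, and the function names are invented for this example. Swap in your real invoices and hardware quotes before drawing conclusions.

```python
def monthly_cloud_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Cloud spend for a given monthly token volume (currency units)."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_local_cost(hardware_cost: float, amortization_months: int,
                       power_watts: float, hours_per_month: float,
                       price_per_kwh: float) -> float:
    """Amortized hardware cost plus electricity for local inference."""
    amortized_hardware = hardware_cost / amortization_months
    electricity = power_watts / 1000 * hours_per_month * price_per_kwh
    return amortized_hardware + electricity


# Placeholder inputs: replace every value with your own figures.
cloud = monthly_cloud_cost(tokens_per_month=50_000_000, price_per_million_tokens=10.0)
local = monthly_local_cost(hardware_cost=1200, amortization_months=36,
                           power_watts=150, hours_per_month=200, price_per_kwh=0.30)

print(f"Cloud: {cloud:.2f} per month")
print(f"Local: {local:.2f} per month")
print(f"Local as a share of cloud: {local / cloud:.0%}")
```

The sketch leaves out staff time, maintenance, and any quality gap between the small and large model, all of which belong in a real comparison.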

If you've been waiting for "AI that just works," this is it. Apple Intelligence—built into iOS—is another example of SLMs democratizing AI for everyday workflows, from summarizing notes to translating text in real time.

Your Implementation Strategy

Your next move: Identify a use case where cloud costs or latency are blocking you. Maybe it's field service teams, retail kiosks, or executive assistants on the go. Deploy an SLM such as one of the compact Llama 3.2 variants (1B or 3B) or a Mistral model optimized for local deployment. Measure speed gains and cost savings against your current cloud setup. One practical win here lays the groundwork for broader AI adoption across your organization.
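
For the "measure speed gains" step, a small timing harness is often enough to start. The sketch below assumes you can wrap whichever model you deploy in a plain Python function that takes a prompt and returns text. `fake_local_model` is a stand-in stub, not a real model call, and the prompts are examples only.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(generate: Callable[[str], str], prompts: List[str],
                    repeats: int = 3) -> Dict[str, float]:
    """Time each prompt `repeats` times and summarize the wall-clock latency."""
    timings: List[float] = []
    for prompt in prompts:
        for _ in range(repeats):
            start = time.perf_counter()
            generate(prompt)
            timings.append(time.perf_counter() - start)
    timings.sort()
    # Nearest-rank 95th percentile over the sorted timings.
    p95_index = max(0, math.ceil(0.95 * len(timings)) - 1)
    return {
        "runs": len(timings),
        "median_s": statistics.median(timings),
        "p95_s": timings[p95_index],
    }


def fake_local_model(prompt: str) -> str:
    """Stand-in for a real local model call (assumption: replace with yours)."""
    time.sleep(0.01)
    return prompt.upper()


sample_prompts = ["Summarize this service report.", "Translate 'hello' to German."]
print(measure_latency(fake_local_model, sample_prompts))
```

Run the same prompts through your current cloud endpoint with a second wrapper function, and you have a like-for-like comparison to put next to the cost figures.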

Let's keep building this together—small models, big impact.

Originally published at First AI Movers. Written by Dr. Hernani Costa, Founder and CEO of First AI Movers.

First AI Movers is part of Core Ventures.
